Azure HDInsight Performance Benchmarking: Interactive Query, Spark, and Presto

Fast SQL query processing at scale is often a key consideration for our customers. In this blog post we compare HDInsight Interactive Query, Spark, and Presto using the industry standard TPCDS benchmarks. These benchmarks are run using out of the box default HDInsight configurations, with no special optimizations. For customers wanting to run these benchmarks, please follow the easy to use steps outlined on GitHub.

Summary of the results

- HDInsight Interactive Query is faster than Spark.

- HDInsight Spark is faster than Presto.

- Text caching in Interactive Query, without converting data to ORC or Parquet, is equivalent to warm Spark performance.

- Interactive query is most suitable to run on large scale data as this was the only engine which could run all TPCDS 99 queries without any modifications at 100TB scale.

- Interactive Query preforms well with high concurrency.

About TPCDS

The TPC Benchmark DS (TPC-DS) is a decision support benchmark that models several generally applicable aspects of a decision support system, including queries and data maintenance. According to TPCDS, the benchmark provides a representative evaluation of performance as a general purpose decision support system. A benchmark result measures query response time in single user mode, query throughput in multi-user mode and data maintenance performance for a given hardware, operating system, and data processing system configuration under a controlled, complex, and multi-user decision support workload. The purpose of TPC benchmarks is to provide relevant, objective performance data to industry users. TPC-DS Version 2 enables emerging technologies, such as big data systems, to execute the benchmark. Please note that these are unaudited results.

HDInsight Interactive Query

HDInsight Interactive Query enables you to get super fast query results from your big data without ZERO ETL (Extract Transform & Load).

Interactive Query in HDInsight leverages (Hive on LLAP) intelligent caching, optimizations in core engines, as well as Azure optimizations to produce blazing-fast query results on remote cloud storage, such as Azure Blob and Azure Data Lake Store.

Comparative performance of Spark, Presto, and LLAP on HDInsight

We conducted these test using LLAP, Spark, and Presto against TPCDS data running in a higher scale Azure Blob storage account. These storage accounts now provide an increase upwards of 10x to Blob storage account scalability. Over last few months we have also contributed to improve the performance of Windows Azure Storage Driver (WASB,) which as a result has helped improve the performance for all HDInsight workloads.

We picked a common external Hive metastore, Azure SQL DB S2, so that various engines could go against the same data and metadata. To learn more, please review the steps to generate data and to run TPCDS queries.

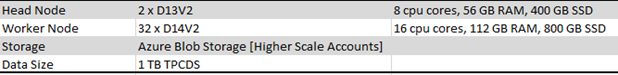

HDInsight configuration

For these tests, we used a similar cluster to run LLAP, Spark, and Presto.

Note: Tests were performed using the default out-of-the-box configurations resulting in no optimizations, no special settings, and no query change for any engine.

The table below uses 45 queries that ran on all engines successfully. As shown, LLAP was able to run many more queries than Presto or Spark.

As you can see with above run, LLAP with ORC is faster than all other engines. What’s an even more interesting observation is that LLAP with Text is also very fast. Even faster then Spark with Parquet file format.

Fast analytics on Hadoop have always come with one big catch, they require up-front conversion to a columnar format like ORC or parquet, which can be time consuming and expensive with on-demand computing. LLAP Dynamic Text Cache converts CSV or JSON data into LLAP’s optimized in-memory format on-the-fly. Caching is dynamic so the queries your users run determine what data is cached.

HDInsight Interactive Query(LLAP) architecture

LLAP also utilized cluster memory DRAM and SSD to provide better performance. Cache pool is a joint pool made up of cluster DRAM and SSD. To give you an example, with D14V2 VM’s in Azure you an get 112 GB of RAM and 800 GB of local SSD, so just a couple of nodes are good enough to keep over a terabyte of data in memory for fast query performance.

Text caching in Interactive Query

Text caching in Interactive Query is a very interesting concept which has caused us to think about big data pipelines very differently. Traditionally, after ingesting data in raw form we needed to convert the data to an optimized file format such as ORC, Parquet, or Avro, as these file formats ensured users would receive good performance while querying the big data. With text caching, raw text and json performance is very similar to ORC which eliminates the need for having additional steps in our big data pipeline, resulting in cost saving as well as faster and fresher query results.

Running Interactive Query on 100TB TPCDS data

As we see many benchmarks all over the web by different vendors, one thing we notice was that they focus on only a select set of queries where their respective engine will produce the best results. We decided to run all 99 queries at 100 TB scale, and only Interactive Query was able to run these unmodified. 41% of queries were returned under 30 seconds, and 71% of queries came back under 2 minutes. This benchmarks proves that Interactive query is fast, has rich SQL, and scales at much larger scale levels without any special efforts.

Concurrency

With the introduction of much improved fine-grain resource management and preemption, Interactive Query (Hive on LLAP) makes it easier for concurrent users. With Interactive Query, the only limit to concurrency is cluster resources. Cluster can be scaled to achieve higher and higher levels of concurrency.

We used number of different concurrency levels to test the concurrency performance. For the dataset, we again used 99 TPCDS queries on 1 TB data with 32 worker node cluster with max concurrency set to 32.

Test 1: Run all 99 queries, 1 at a time – Concurrency = 1

Test 2: Run all 99 queries, 2 at a time – Concurrency = 2

Test 3: Run all 99 queries, 4 at a time – Concurrency = 4

Test 4: Run all 99 queries, 8 at a time – Concurrency = 8

Test 5: Run all 99 queries, 16 at a time – Concurrency = 16

Test 6: Run all 99 queries, 32 at a time – Concurrency = 32

Test 7: Run all 99 queries, 64 at a time – Concurrency = 64

Results: As outlined in the above results, Interactive Query is a super optimized engine for running concurrent queries. The longest time to finish the workload was with single concurrent query.

Comparison with Hive and performance improvements over time

Its important that we compare Interactive Query (LLAP) performance with Hive. There has been a ton of work done to make Hive more performant in the community, as well as some of the work we have been doing to improve Windows Azure storage driver performance. Back in January 2017, it took 200 minutes to run the TPCDS workload with Hive 1.2, and with the storage driver improvements Hive can now run the benchmark in 137 minutes. With LLAP cached data, the benchmark completes in 49 minutes. These are impressive gains.

Integration with Power BI direct Query, Apache Zeppelin, and other tools

Power BI now allows you to connect directly to your HDInsight Interactive Query cluster to explore and monitor data without requiring a data model as an intermediate cache. This offers interactive exploration of your data and automatically refreshes the visuals without requiring a scheduled refresh. To learn more about how to get started, please watch the video HDInsight Interactive Query with Power BI.

HDInsight Interactive Query supports many end points. You can also use Apache Zeppelin , Visual Studio, Visual Studio Code, Hive View, and Beeline to run your queries

Summary

Azure HDInsight is a fully-managed, full spectrum, open-source analytics cloud service by Microsoft that makes it easy, fast, and cost-effective to process massive amounts of data. You can use the most popular open-source engines such as Hadoop, Spark, Hive, LLAP, Kafka, Storm, HBase, and R, and install more open source frameworks from the ecosystem. With Azure HDInsight, our mission is to provide a fully managed, full spectrum of open source technologies combined with the power of the cloud. Customers today are using these open source technologies to build a variety of different applications such as batch processing, ETL, data warehousing, machine learning, IoT, and more. The goal of this blog post is to share some of the intelligence on SQL query performance of various Open Source engines in the Azure HDInsight environment.

Do you have questions or comments? Please reach out to AskHDInsight@microsoft.com for more information.

Source: Azure Blog Feed