Event-driven analytics with Azure Data Lake Storage Gen2

Most modern-day businesses employ analytics pipelines for real-time and batch processing. A common characteristic of these pipelines is that data arrives at irregular intervals from diverse sources. This makes it harder to orchestrate the pipeline so that data is processed in a timely fashion.

The answer to these challenges is a decoupled, event-driven pipeline built from serverless components that responds to changes in data as they occur.

An integral part of any analytics pipeline is the data lake. Azure Data Lake Storage Gen2 provides secure, cost-effective, and scalable storage for the structured, semi-structured, and unstructured data arriving from diverse sources. Azure Data Lake Storage Gen2’s performance, global availability, and partner ecosystem make it the platform of choice for analytics customers and partners around the world. Next comes the event processing aspect. Azure Event Grid is a fully managed event routing service, Azure Functions is a serverless compute engine, and Azure Logic Apps is a serverless workflow orchestration engine. Together, they make it easy to run event-based processing and workflows that respond to events in real time.

Today, we’re very excited to announce that Azure Data Lake Storage Gen2 integration with Azure Event Grid is in preview! This means that Azure Data Lake Storage Gen2 can now generate events that can be consumed by Event Grid and routed to subscribers with webhooks, Azure Event Hubs, Azure Functions, and Logic Apps as endpoints. With this capability, individual changes to files and directories in Azure Data Lake Storage Gen2 can automatically be captured and made available to data engineers for creating rich big data analytics platforms that use event-driven architectures.

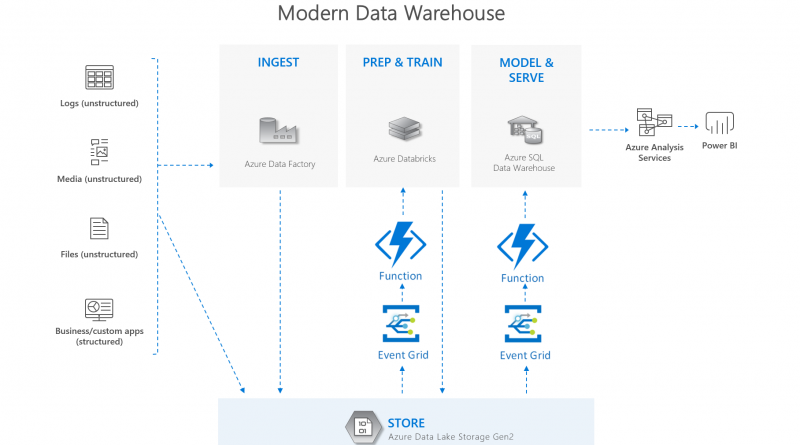

The diagram above shows a reference architecture for the modern data warehouse pipeline built on Azure Data Lake Storage Gen2 and Azure serverless components. Data from various sources lands in Azure Data Lake Storage Gen2 via Azure Data Factory and other data movement tools. Azure Data Lake Storage Gen2 generates events for new file creation, updates, renames, or deletes, which are routed via Event Grid and an Azure Function to Azure Databricks. An Azure Databricks job processes the file and writes the output back to Azure Data Lake Storage Gen2. When this happens, Azure Data Lake Storage Gen2 publishes a notification to Event Grid, which invokes an Azure Function to copy the data to Azure SQL Data Warehouse. Data is finally served via Azure Analysis Services and Power BI.
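
The two routing decisions in this architecture can be sketched in a few lines of Python. This is a minimal illustration, not the reference implementation: the directory names `raw/` and `curated/`, the action labels, and the function name `choose_action` are all hypothetical, and the sketch assumes each event carries an `eventType` and a `data.url` of the form `https://<account>.dfs.core.windows.net/<file system>/<path>`.

```python
from urllib.parse import urlparse

# Hypothetical directory layout inside the file system (not from the post).
RAW_PREFIX = "raw/"          # where ingestion tools land new files
CURATED_PREFIX = "curated/"  # where the Databricks job writes its output


def choose_action(event: dict) -> str:
    """Pick the next pipeline step for a single storage event."""
    if event.get("eventType") != "Microsoft.Storage.BlobCreated":
        return "skip"

    url = event.get("data", {}).get("url", "")
    # Assumed URL path shape: /<file system>/<directory>/<file>.
    # Drop the leading file system name to get the path inside the lake.
    path = urlparse(url).path.lstrip("/")
    _, _, relative = path.partition("/")

    if relative.startswith(RAW_PREFIX):
        return "run_databricks_job"
    if relative.startswith(CURATED_PREFIX):
        return "copy_to_sql_dw"
    return "skip"
```

Routing on the directory prefix matters here because the job's own output also raises a creation event. Keeping input and output in separate directories is one way to stop the pipeline from re-triggering itself.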

The events that will be made available for Azure Data Lake Storage Gen2 are BlobCreated, BlobDeleted, BlobRenamed, DirectoryCreated, DirectoryDeleted, and DirectoryRenamed. Details on these events can be found in the documentation “Azure Event Grid event schema for Blob storage.”
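
To make the six event types concrete, here is a small Python sketch of a subscriber that parses a delivered batch and summarizes each event. It assumes the field names of the Event Grid event schema (`eventType`, `data.url`, and `data.sourceUrl` / `data.destinationUrl` for renames) and the `Microsoft.Storage.` prefix on event type strings, so check the schema documentation before relying on them. The sample payload, account name, and function names are made up for illustration.

```python
import json

# Event types named in this post, with the assumed "Microsoft.Storage." prefix.
HANDLED_EVENT_TYPES = {
    "Microsoft.Storage.BlobCreated",
    "Microsoft.Storage.BlobDeleted",
    "Microsoft.Storage.BlobRenamed",
    "Microsoft.Storage.DirectoryCreated",
    "Microsoft.Storage.DirectoryDeleted",
    "Microsoft.Storage.DirectoryRenamed",
}


def parse_events(body: str) -> list:
    """Decode a request body; events are assumed to arrive as a JSON array."""
    payload = json.loads(body)
    return payload if isinstance(payload, list) else [payload]


def describe_event(event: dict) -> str:
    """Return a one-line summary of a storage event."""
    event_type = event.get("eventType", "")
    if event_type not in HANDLED_EVENT_TYPES:
        return f"ignored: {event_type or 'unknown'}"

    short_name = event_type.rsplit(".", 1)[-1]
    data = event.get("data", {})
    if short_name.endswith("Renamed"):
        # Rename events are assumed to carry both the old and the new URL.
        return f"{short_name}: {data.get('sourceUrl')} -> {data.get('destinationUrl')}"
    return f"{short_name}: {data.get('url')}"


# Illustrative payload only; real events carry more fields.
SAMPLE_BODY = json.dumps([
    {
        "eventType": "Microsoft.Storage.BlobCreated",
        "data": {"url": "https://example.dfs.core.windows.net/lake/raw/sales.csv"},
    },
    {
        "eventType": "Microsoft.Storage.DirectoryRenamed",
        "data": {
            "sourceUrl": "https://example.dfs.core.windows.net/lake/old",
            "destinationUrl": "https://example.dfs.core.windows.net/lake/new",
        },
    },
])
```

Calling `[describe_event(e) for e in parse_events(SAMPLE_BODY)]` yields one summary line per event, which is a convenient starting point for logging or dispatching to per-type handlers.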

Some key benefits include:

- Automate workflows through seamless integration, so you can build an event-driven pipeline in minutes.

- Enable alerting with rapid reaction to the creation, deletion, and renaming of files and directories. Many scenarios benefit from this, especially those associated with data governance and auditing. For example, you can send alerts for all changes to high business impact data, set up email notifications for unexpected file deletions, and detect and act upon suspicious activity from an account.

- Eliminate the complexity and expense of polling services, and use webhooks to integrate events coming from your data lake with third-party applications such as billing and ticketing systems.
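
As a rough illustration of the alerting scenario, the Python sketch below filters a batch of events down to deletions that touch watched directories. The watched directory names, the function names, and the alert text format are hypothetical. The event field names (`eventType`, `eventTime`, `data.url`) are assumed from the Event Grid event schema, and sending the actual email or ticket is left out.

```python
from urllib.parse import urlparse

DELETE_EVENT_TYPES = {
    "Microsoft.Storage.BlobDeleted",
    "Microsoft.Storage.DirectoryDeleted",
}

# Hypothetical directories holding high business impact data.
WATCHED_DIRECTORIES = ("finance/", "hr/")


def relative_path(url: str) -> str:
    """Strip the host and the file system name from an event URL."""
    path = urlparse(url).path.lstrip("/")
    return path.partition("/")[2]


def deletion_alerts(events: list) -> list:
    """Return one alert line per deletion inside a watched directory."""
    alerts = []
    for event in events:
        if event.get("eventType") not in DELETE_EVENT_TYPES:
            continue
        url = event.get("data", {}).get("url", "")
        # Append "/" so that deleting the watched directory itself also matches.
        if (relative_path(url) + "/").startswith(WATCHED_DIRECTORIES):
            when = event.get("eventTime", "unknown time")
            alerts.append(f"{when}: {event['eventType']} on {url}")
    return alerts
```

Because the events are pushed to the subscriber, a check like this runs only when something changes, which is what removes the need for a polling service.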

Next steps

Azure Data Lake Storage Gen2 integration with Azure Event Grid is now available in West Central US and West US 2. Subscribing to Azure Data Lake Storage Gen2 events works the same way as it does for Azure Storage accounts. To learn more, see the documentation “Reacting to Blob storage events.” We would love to hear about your experiences with the preview and get your feedback at ADLSGen2QA@microsoft.com.

Source: Azure Blog Feed