Azure Data Explorer: Log and telemetry analytics benchmark

Azure Data Explorer (ADX), a component of Azure Synapse Analytics, is a highly scalable analytics service optimized for structured, semi-structured, and unstructured data. It gives users an interactive query experience for drawing insights from ever-growing volumes of log and telemetry data. It is well suited to analyzing high volumes of fresh and historical data in the cloud using SQL or the Kusto Query Language (KQL), a powerful and user-friendly query language.

Azure Data Explorer is a key enabler for Microsoft’s own digital transformation. Virtually all Microsoft products and services use ADX in one way or another: for troubleshooting, diagnosis, monitoring, and machine learning, and as a data platform for Azure services such as Azure Monitor, PlayFab, Sentinel, Microsoft 365 Defender, and many others. Microsoft’s customers and partners use ADX for a wide variety of scenarios, including fleet management, manufacturing, security analytics, package tracking and logistics, IoT device monitoring, and financial transaction monitoring. Over the last few years, the service has seen phenomenal growth and now runs on millions of Azure virtual machine cores.

Last year, the third generation of the Kusto engine (EngineV3) was released, and it is currently offered as a transparent, in-place upgrade to all users not already on the latest version. The new engine features a completely new implementation of the storage, cache, and query execution layers. As a result, performance has at least doubled in many mission-critical workloads.

Superior performance and cost-efficiency with Azure Data Explorer

To help our users assess the performance of the new engine and the cost advantages of ADX, we looked for an existing logs and telemetry benchmark with the workload characteristics we commonly see among our users:

- Telemetry tables that contain structured, semi-structured, and unstructured data types.

- Records in the hundreds of billions to test massive scale.

- Queries that represent common diagnostic and monitoring scenarios.

As we did not find an existing benchmark that met these needs, we collaborated with and sponsored GigaOm to create and run one. The new logs and telemetry benchmark is publicly available in this GitHub repo. The repository includes a data generator that produces datasets of 1 GB, 1 TB, and 100 TB, as well as a set of 19 queries and a test driver to execute the benchmark.
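To make the idea of a benchmark test driver concrete, here is a minimal Python sketch of how one might time a fixed set of queries under several simulated concurrent users. It is not the driver from the GigaOm repository. The names `run_benchmark`, `execute`, and `fake_execute` are hypothetical, and the query strings are placeholders. In a real run, `execute` would be whatever function submits a query to your cluster and waits for the result.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def run_benchmark(
    queries: List[str],
    execute: Callable[[str], object],
    concurrent_users: int = 1,
) -> Dict[str, float]:
    """Run every query once per simulated user and summarize the latencies."""

    def user_session(_: int) -> List[float]:
        # Each simulated user runs the full query set sequentially.
        latencies = []
        for query in queries:
            start = time.perf_counter()
            execute(query)  # hypothetical callable that submits the query
            latencies.append(time.perf_counter() - start)
        return latencies

    wall_start = time.perf_counter()
    # One worker thread per simulated user, so sessions overlap in time.
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        sessions = list(pool.map(user_session, range(concurrent_users)))
    wall_seconds = time.perf_counter() - wall_start

    all_latencies = [lat for session in sessions for lat in session]
    return {
        "queries_executed": len(all_latencies),
        "wall_seconds": wall_seconds,
        "mean_latency_seconds": statistics.mean(all_latencies),
        "max_latency_seconds": max(all_latencies),
    }


def fake_execute(query: str) -> None:
    """Stand-in for a real query call; it only sleeps briefly."""
    time.sleep(0.001)


# Placeholder query names; a real run would load the benchmark's query files.
summary = run_benchmark(
    [f"query_{i}" for i in range(19)], fake_execute, concurrent_users=50
)
```

Threads are a reasonable choice for a sketch like this because a driver spends most of its time waiting on the service, not computing. A more careful driver would also record per-query results and failures.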

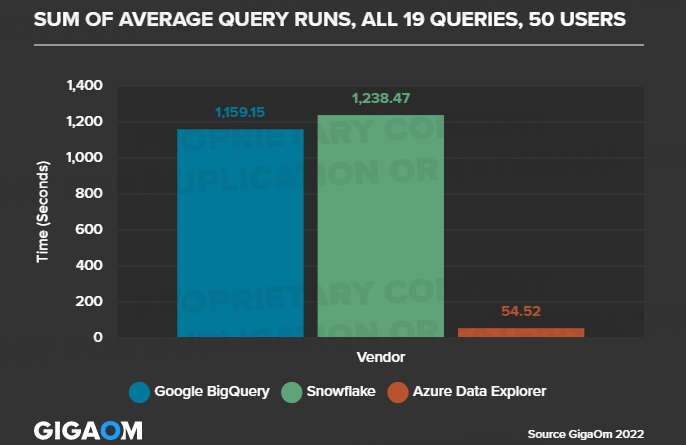

The results, now available in the GigaOm report, show that Azure Data Explorer provides superior performance at a significantly lower cost in both single and high-concurrency scenarios. For example, the following chart taken from the report displays the results of executing the benchmark while simulating 50 concurrent users:

Learn more

For further insights, we highly recommend reading the full report. And don’t just take our word for it. Use the Azure Data Explorer free offering to load your data and analyze it at extreme speed and unmatched productivity.

Check out our documentation to find out more about Azure Data Explorer and learn more about Azure Synapse Analytics. For deeper technical information, check out the new book Scalable Data Analytics with Azure Data Explorer by Jason Myerscough.

Source: Azure Blog Feed